Head to Head: Smart Mobile Studio vs. Embarcadero Delphi

I know I have been writing a lot about Smart Mobile Studio and memory access lately. But I’m sure you all understand that it’s just as vital in HTML5 applications as it is under native Delphi or C# applications.

V8 JIT powered JavaScript is awesome, even on embedded platforms

Having spent the better part of this weekend optimizing and refactoring the codebase, I am now able to write 1,000,000 (one million) records to memory in 417 milliseconds. At first I did not believe it was possible; there had to be an error somewhere, or perhaps the data was not actually being written. But a hexdump of the buffer reveals that there is no error. It’s that bloody fast.

    mChunk := 0;
    mMake.Allocate(21000021);
    mStart := Now;
    for x := 0 to 1000000 do
    begin
      mMake.Write(mChunk + 0, $AAAA0000);
      mMake.WriteFloat32(mChunk + 4, 19.24);
      mMake.WriteFloat64(mChunk + 8, 24.18);
      mMake.Write(mChunk + 16, false);
      mMake.Write(mChunk + 17, $0000AAAA);
      inc(mChunk, 21);
    end;
    mStop := Now;

This means that JavaScript, in particular JIT-compiled code running on V8 (the engine in Chrome and Chromium), is now faster than native Delphi in my tests for standard memory operations (move, fill, read, write).
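
For readers who think in JavaScript terms, here is a minimal TypeScript sketch of what a loop like the one above amounts to on top of an `ArrayBuffer`. It is not the Smart Mobile Studio runtime code: the names `writeRecords` and `hexDump` are hypothetical, the boolean is assumed to occupy one byte, and little-endian byte order is assumed. Because the 21-byte records leave most fields unaligned, the sketch goes through a `DataView` rather than a `Float32Array` or `Float64Array`, which require aligned offsets.

```typescript
// Hypothetical counterpart of the Smart Pascal loop; not the SMS runtime.
// Assumed packed record layout (21 bytes):
//   uint32 @0, float32 @4, float64 @8, bool (1 byte) @16, uint32 @17
const RECORD_SIZE = 21;

function writeRecords(count: number): { buffer: ArrayBuffer; elapsedMs: number } {
  const buffer = new ArrayBuffer(count * RECORD_SIZE);
  const view = new DataView(buffer);
  const start = Date.now();
  let offset = 0;
  for (let i = 0; i < count; i++) {
    // The final `true` argument selects little-endian byte order (an assumption).
    view.setUint32(offset, 0xaaaa0000, true);
    view.setFloat32(offset + 4, 19.24, true);
    view.setFloat64(offset + 8, 24.18, true);
    view.setUint8(offset + 16, 0); // false stored as a single zero byte
    view.setUint32(offset + 17, 0x0000aaaa, true);
    offset += RECORD_SIZE;
  }
  return { buffer, elapsedMs: Date.now() - start };
}

// Render a byte range as hex pairs, for checking that data really was written.
function hexDump(buffer: ArrayBuffer, start: number, length: number): string {
  const bytes = new Uint8Array(buffer, start, length);
  const parts: string[] = [];
  for (let i = 0; i < bytes.length; i++) {
    parts.push(("0" + bytes[i].toString(16)).slice(-2));
  }
  return parts.join(" ");
}
```

Calling `writeRecords(1000001)` mirrors the loop bounds above (0 to 1000000 inclusive, which is why 21000021 bytes are allocated). Timings vary with browser, hardware and warm-up, so treat `elapsedMs` as indicative only.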

Graphics humiliation

Some five years back when I proposed on my earlier blog that a compiler producing JavaScript from Object Pascal could be constructed, people laughed. One of the biggest challenges when creating something new is that you face the general consensus of people stuck in the past, fixed to rigid ideas which may no longer apply to reality.

This was also the case with Delphi and our community back then. To the majority of programmers at that point in time, JavaScript was deemed a toy; it was too slow, unserious and unstable for anything real and tangible. A proposal that JavaScript could in fact be used as a universal platform for mobile and desktop applications was laughed out of the chat rooms.

Introduction of new ideas is often met by heavy resistance

Thankfully a genius French developer, Eric Grange, who maintains Delphi Web Script (DWS), came to my aid. He created an HTML5 canvas demo which outperformed Delphi by orders of magnitude. He ran it first in Firefox, which as we all know is slightly slower than the WebKit-based browsers, and later executed the same code on V8 in Chrome, leaving native Delphi in the dust.

So the laughter I had faced was quickly silenced. I had been vindicated, and Eric and I went on to write what today is Smart Mobile Studio. Eric adapted Delphi Web Script to serve as the compiler framework, adding a JavaScript code generator which not only realized my ideas; it also went far beyond anything I had envisioned, with a VMT (virtual method table), class inheritance, interfaces, operator overloading, partial classes and the works.

The moral of the story here is that we were never out to humiliate Delphi. Quite the opposite, Smart Mobile Studio was created as a toolkit for Delphi developers. In fact it was created to help Embarcadero venture into new markets. I would never have expected to be treated the way we have been by Embarcadero, which has blacklisted our entire team from public events, threatening to pull funding if we are allowed to speak (!)

But it has made one thing clear. I no longer care if Embarcadero is damaged by my products. With the exception of two individuals working for EMB, they have not given me (or my team) the time of day. Which is strange, because our technology would revolutionize and to some degree re-vitalize the Delphi brand. Something it sorely needs.

Memory matters

Well, today I am pleased to announce that not only does Smart Mobile Studio humiliate Delphi in every graphics operation known to mankind, including 3D rotation of user-controls; Smart Mobile Studio is now faster in every aspect of memory manipulation. This includes:

- Writing untyped arrays to memory

- Writing typed arrays to memory

- Moving data between two memory segments

- Moving data within the same memory segment

- Reversing the data inside a memory segment

- Filling a memory segment with char data

- Filling a memory segment with untyped data

- Filling a memory segment with typed data
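
To make the operations in this list concrete, here is a rough TypeScript sketch of how each one maps onto standard typed-array calls (`set`, `subarray`, `copyWithin`, `reverse`, `fill`). The function names are hypothetical and this is not the Smart Mobile Studio memory unit; a "memory segment" is simply assumed to be a `Uint8Array`.

```typescript
// Hypothetical helpers; a "segment" is assumed to be a plain Uint8Array.

// Move bytes between two segments.
function moveBetween(src: Uint8Array, srcOffset: number,
                     dst: Uint8Array, dstOffset: number, length: number): void {
  dst.set(src.subarray(srcOffset, srcOffset + length), dstOffset);
}

// Move bytes within one segment; copyWithin copes with overlapping ranges.
function moveWithin(seg: Uint8Array, from: number, to: number, length: number): void {
  seg.copyWithin(to, from, from + length);
}

// Reverse the bytes of a segment in place.
function reverseSegment(seg: Uint8Array): void {
  seg.reverse();
}

// Fill a segment with a single character (low byte of its char code).
function fillChar(seg: Uint8Array, ch: string): void {
  seg.fill(ch.charCodeAt(0) & 0xff);
}

// Fill a segment with a repeating untyped byte pattern.
function fillPattern(seg: Uint8Array, pattern: Uint8Array): void {
  if (pattern.length === 0) return; // nothing to repeat
  for (let i = 0; i < seg.length; i += pattern.length) {
    const chunk = Math.min(pattern.length, seg.length - i);
    seg.set(pattern.subarray(0, chunk), i);
  }
}

// Fill a buffer with a typed (32-bit integer) value; trailing bytes that do
// not make up a whole Int32 are left untouched.
function fillInt32(buffer: ArrayBuffer, value: number): void {
  new Int32Array(buffer, 0, Math.floor(buffer.byteLength / 4)).fill(value);
}
```

None of these helpers allocate beyond the lightweight views returned by `subarray`, which is one reason such operations can be cheap in a JIT-compiled engine.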

The tests were all done on VMware Fusion running on a 2.7 GHz Intel Core i5 iMac with 8 GB memory (1600 MHz DDR3).

1 GB of memory was allocated to the VMware machine, which is running Windows 7 Ultimate Service Pack 2. The tests were performed using Chrome (Smart Mobile Studio ships with Chromium Embedded) and Chrome for OS X.

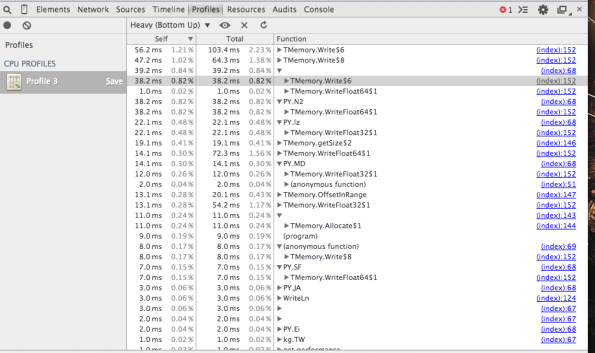

Inspection of memory and CPU timeline

The following Delphi versions were used in my tests:

- Delphi 7

- Delphi 2005

- Delphi 2006

- Delphi XE

- Delphi XE3

- Delphi XE6

- Delphi XE7

I also ran the tests using the latest FreePascal compiler, and I am happy to report that FreePascal is the only compiler capable of delivering roughly the same performance as V8 (the JavaScript runtime and JIT engine in Chrome and Chromium).

To be perfectly honest, I have begun to re-compile more and more Delphi projects using FreePascal and Lazarus these days. And we are moving Smart Mobile Studio to FreePascal as well. It gives us the benefit of compiling our product for OS X, Linux and Win32.

It also puts to rest any clause in the Embarcadero License which prevents users from producing executables from a Delphi application (yeah, it’s that screwed up!). So yes, a FreePascal powered version of Smart Mobile Studio will appear sooner or later.

Test yourself

The memory management unit, support for streams and marshaled pointers will be available in the next update of Smart Mobile Studio, so you can download a trial version if you don’t own a license and run the tests yourself.

The full source code will be posted here shortly; it is identical to the SMS version in both payload and infrastructure.
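
To give an idea of what a byte-based stream over a memory buffer involves, here is a small TypeScript sketch. It is an illustration under my own assumptions, not the Smart Mobile Studio stream class: `ByteStream` and its method names are hypothetical, the initial capacity of 16 bytes is arbitrary, and the buffer simply doubles when a write runs past the end.

```typescript
// Hypothetical growable byte stream with a read/write cursor.
class ByteStream {
  private data = new Uint8Array(16); // arbitrary initial capacity
  private size = 0;                  // bytes actually written
  private cursor = 0;                // current read/write position

  getSize(): number { return this.size; }
  getPosition(): number { return this.cursor; }

  // Move the cursor; positions past the written data are rejected.
  seek(position: number): void {
    if (position < 0 || position > this.size) {
      throw new RangeError("seek outside stream");
    }
    this.cursor = position;
  }

  // Write bytes at the cursor, growing the backing buffer when needed.
  write(bytes: Uint8Array): void {
    const end = this.cursor + bytes.length;
    if (end > this.data.length) {
      let capacity = this.data.length;
      while (capacity < end) capacity *= 2;
      const grown = new Uint8Array(capacity);
      grown.set(this.data.subarray(0, this.size));
      this.data = grown;
    }
    this.data.set(bytes, this.cursor);
    this.cursor = end;
    if (end > this.size) this.size = end;
  }

  // Read up to `count` bytes from the cursor and return them as a copy.
  read(count: number): Uint8Array {
    const end = Math.min(this.cursor + count, this.size);
    const out = this.data.slice(this.cursor, end);
    this.cursor = end;
    return out;
  }
}
```

The cursor is a plain number updated inline by `read` and `write`, so sequential access needs no separate seek call between operations.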

Well done! Keep going, please.

This is a joke. I do not have the same numbers. Wrongly coded Delphi may be slower than V8, but not well-written code with pointers. And the memory consumption difference is huge, apart from typed-array memory work.

I used both for memory-intensive tasks, and after tuning on both sides, using e.g. jsperf for SMS, the Delphi code was always faster.

Naive benchmarks with fixed constants in loops may be faster. Not useful code.

Your infrastructure layer for Delphi is probably a true bottleneck.

Joke? That’s what they said about the graphics performance earlier, until they saw the numbers and tested it themselves.

And very little bottleneck. XE7 is freshly installed on the same VMware disk. Nothing else was running while the tests were performed (separately, of course).

Anyways, I did not use pointers in my test. I used var and const params, which roughly equate to a marshaled untyped reference. But the whole “point” was to use ordinary reads and writes, which includes Read() and Write() operations on streams as well, compared to the SMS streams. Delphi has the slowest streams, mainly due to how the file cursor is maintained. The seek ping-pong calls are just silly to watch.

Anyways, I also experimented with fixed records of the same types as under JS. And JavaScript won every single time.

Wait until you get the next upgrade, then run the test yourself if you don’t believe me.

Well, numbers don’t lie. Nor would I post such a boast if it was just something “made up”. I have posted pictures of the profiler after running the code; you can count the ms yourself if you like.

Then write a similar read/write routine into an untyped array of bytes in Delphi, and you will be surprised at the result.

Your numbers are certainly true.

My concern is that the test you are talking about is a “best fit” test for V8 (relying on constants to pattern the binary output), and you would never encounter this pattern in real work.

I did my own numbering and benchmarking on REAL data (huge – biblical – text UTF-8 and UTF-16 encoded in memory binaries, with no string allocation), and the JavaScript/SMS version is always slower than Delphi’s.

Also pattern compression (using LZ variants) is slower when run in V8.

And I profiled and tuned the SMS code so that the JavaScript used the best patterns (using jsperf). I can assure you.

Well, one of my favorite libraries is byterage, which I wrote in order to simplify flat-file data access and also for dealing with binary files objectively rather than just a bunch of readfile/writefile or readStream/writestream calls.

So for me, working on a node/js based flat-file database from scratch, being able to write X number of records fast into memory is very “real” so to speak.

There is also graphics to consider, and there are cases where a manual “bitblt” will be better than using the putImageData canvas API.
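
As a rough illustration of what a manual “bitblt” means here, the TypeScript sketch below copies a rectangle between two pixel buffers row by row. It assumes 32-bit pixels held in `Uint32Array`s (for canvas data, such a view can be created over the buffer behind `ImageData.data`); the `blit` name and its parameters are hypothetical, and no clipping is done, so the rectangle must fit inside both buffers.

```typescript
// Hypothetical row-by-row rectangle copy between two 32-bit pixel buffers.
// srcWidth/dstWidth are the widths of the full buffers in pixels.
function blit(src: Uint32Array, srcWidth: number,
              dst: Uint32Array, dstWidth: number,
              sx: number, sy: number, w: number, h: number,
              dx: number, dy: number): void {
  for (let row = 0; row < h; row++) {
    const from = (sy + row) * srcWidth + sx; // first source pixel of this row
    const to = (dy + row) * dstWidth + dx;   // first target pixel of this row
    dst.set(src.subarray(from, from + w), to);
  }
}
```

Each row is moved with a single `set` call rather than pixel by pixel, which keeps the inner work inside the engine’s native copy routine.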

I am fully aware that there are a lot of tasks which native code does better, and probably always will, unless we see a complete rewrite of how JS works internally, with more .NET-style JIT tech involved (and fixed types all the way). My job is basically to push borders and to see how object pascalified I can make the JS environment, so getting memory read/write under wraps, together with true byte-based streams, buffers and datatype conversion which are fast and can be relied on, is no small feat to be honest.

But I know what you mean; some topics are best handled by native code. You get no argument from me there.

I was actually as surprised as you were to see these numbers. I tested again and again, thinking it was wrong, but nope. They are correct.

I may have gotten lucky and found the one example which hits a sweet spot, but I also did a test with streams with a different and more complex write structure, and it’s just as fast. I don’t know how they did it, but V8 really is one hell of an engine.